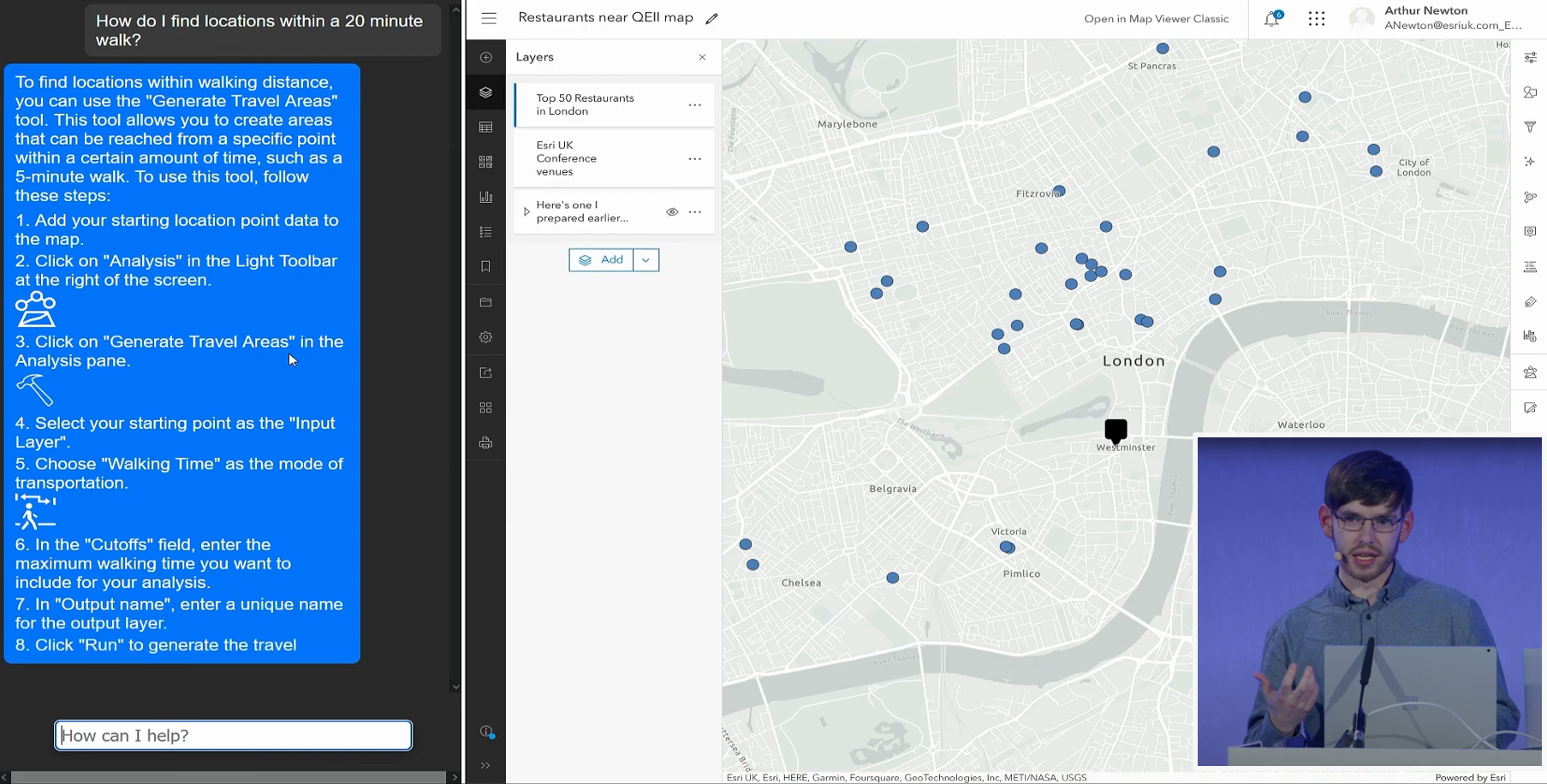

Last year, I shared a vision of generative AI in GIS at the Esri UK Annual Conference 2023. I showed how large language models like ChatGPT can extract and categorise spatial information from plain text, how AI assistants might transform how we interact with GIS, and how AI can suggest smarter mapping styles that adapt to our needs. Finally, I showed how the next evolution of AI can automate entire workflows like creating a Story Map from the information in a blog article.

This is obviously all really exciting stuff and I’m sure you now can’t wait to jump in and start using this technology for yourself. But where to start? Well, in this blog I’m going to cover the basics that you’ll need to know if you want to try using generative AI alongside your GIS.

What is Generative AI?

Let’s start with the basics. Artificial Intelligence, or AI, is the idea that computers or machines can mimic the ways the human brain perceives and synthesises information. AI is not a particularly new technology; active AI research has been going on for decades and it’s difficult to use a modern computer or smartphone without encountering AI in one form or another. However, recent advances in research, computing power and data availability have led to rapid growth in the capabilities and popularity of one type of AI in particular: Generative AI.

Generative AI is a catch-all term for any kind of AI model that can, well, generate something. Text generators and image generators are the most mature types of generative AI, but research into generating other types of information with AI is progressing rapidly. AI image generators such as Dall-E, Midjourney or Stable Diffusion typically generate images based on a text prompt, while text-generating Large Language Models (LLMs) like ChatGPT can perform a wide variety of language-based tasks and engage in shockingly natural conversations. All this is possible because these AI models have learnt patterns and associations from vast collections of training data from across the Internet.

There are a multitude of use cases for generative AI in the world of geospatial, far too many to talk about in detail.

I’m sure we’ll continue to see innovative applications of the technology popping up everywhere as we all discover how best to benefit from it. For now though, let’s focus on how to start using it.

How to get started with generative AI

Generative AI is currently experiencing something of a boom in popularity, partly due to a trend towards what could be called ‘AI-as-a-Service’. A growing number of providers are offering AI capabilities hosted in the cloud and accessible over the Internet. It’s never been easier to get started in AI. Just don’t forget to check your company’s policy on AI use.

The AI service I’ve spent the most time with is the one from OpenAI. OpenAI are big players in the AI-as-a-Service space in large part because of ChatGPT, which is a freely accessible demo designed to showcase the capabilities of the company’s large language models (LLMs). Although only a demo, ChatGPT is being used – for free – for all kinds of things from writing code to directing businesses. If you want to start experimenting with AI then ChatGPT is probably the most accessible place to start. I’m not sure I’d trust it with anything too critical – many users have reported it will frequently and confidently get things wrong, and it can stop working completely during busy periods – but it is remarkably capable and lots of fun to test and play around with. If you have a bit of coding knowledge and want to use the capabilities of ChatGPT in your own applications, OpenAI also offer a commercial API for their LLMs, which is what my conference demos were built on.

There are other options too. Other tech companies, including Google and Meta, are developing their own LLMs and Microsoft has begun integrating OpenAI’s models into their products. If you prefer to run things on your own hardware then some generative AI models, like the Stable Diffusion image generator, are lightweight enough to run locally on a reasonably powerful computer. There is also a whole world of AI research on platforms like GitHub and Google Colab if you like to stay on the bleeding edge.

Building apps on generative AI

Before we get too excited, it’s worth remembering that generative AI is still very much an emerging technology. It’s a great tool to experiment with, but it’s currently unregulated and there’s still a lot that needs to be done to validate the security and accuracy of generative AI before it’s ready to use in critical business systems.

The emerging nature of generative AI also means that, for now at least, we need some developer skills if we want to use it in our own GIS apps and workflows. OpenAI’s API can be used in many programming languages, including Python and JavaScript, so it can be readily used alongside the ArcGIS API for Python and the ArcGIS Maps SDK for JavaScript. Let’s break down a couple of example prompts to see how they work.

Example 1: generating a colour palette

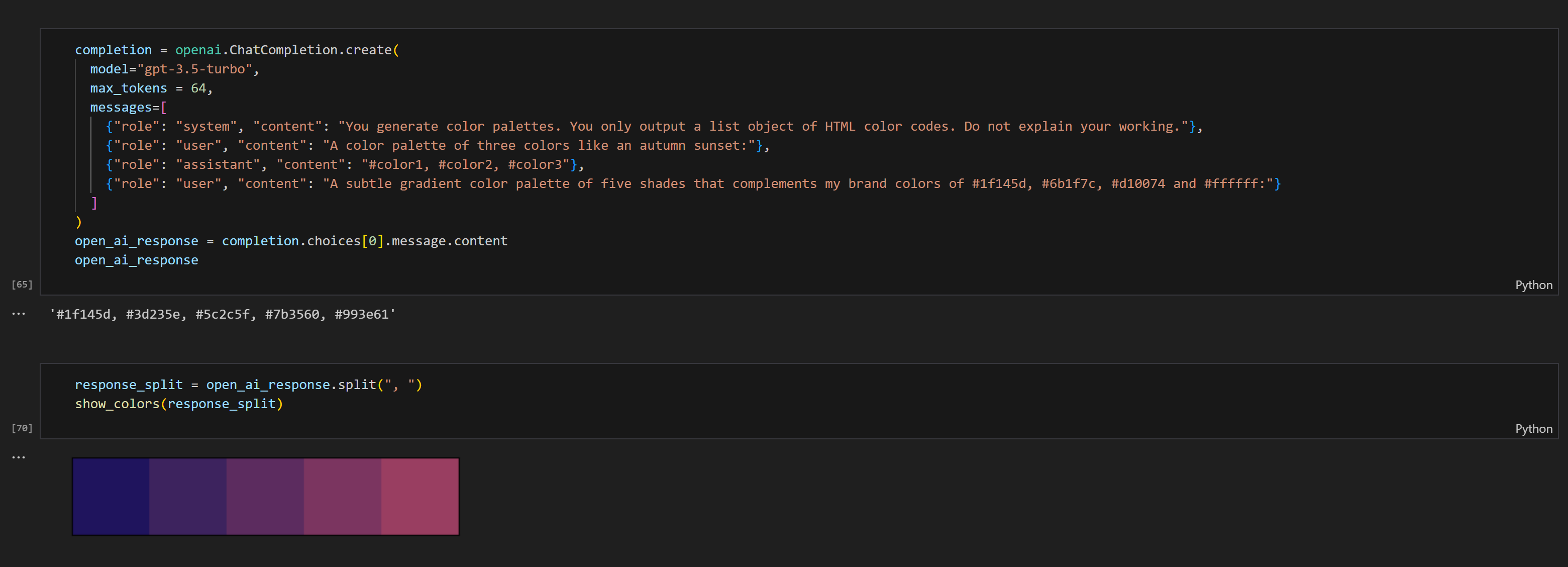

In this first example, we’ll ask the AI to generate a set of colours based on a text description that we can then use to style symbols on a map. We’ll be using the OpenAI API in Python (via the openai Python library), but the workflow for using the OpenAI API is essentially the same however we access it. If you want to learn more about using the OpenAI API, OpenAI provide plenty of guides and examples to help you get started.

If you have used ChatGPT before, you’ll be familiar with how generative AI tools work. We give the AI one or more text prompts and it generates a response. The process is essentially the same when using the OpenAI API but we get a little more control over how we prompt the AI, which helps achieve a more consistent output. Very useful if we want to use the outputs in a script.

Prompts for OpenAI’s chat models are typically formed of three parts, allowing us to provide very specific instructions. The first part is the ‘system’ message, which describes the role that the AI should take, like “You are a helpful assistant” to create a chatbot. However, for our example we need to be a little more specific and tell it to only output a list of colours as HTML colour codes, a common format for representing colours on the web.

Probably the most important part of this system message is “Do not explain your working”. Large Language Models are quite talkative and will often generate lots of descriptive text in addition to the output we actually want, unless we specify that we don’t want that.

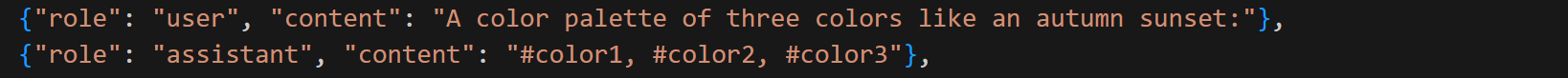

The second part of the prompt is the simulated chat. While the ChatGPT application automatically keeps a record of your current conversation for a consistent experience, the API needs to have all of this information included in the prompt itself. Messages labelled ‘user’ represent queries, comments or requests made by you the user. Messages labelled ‘assistant’ are interpreted as previous responses from the AI, which serve as examples of the kind of response we are looking for. It’s good practice to include at least one example when writing prompts for Large Language Models – this applies to ChatGPT too!

The final part of the prompt is the actual request we would like the AI to respond to. It needs to be as descriptive as possible to increase our chances of getting the result we want. Different generative AI models each have their own quirks with how they interpret instructions and there are often tricks you’ll discover as you experiment. For instance, I found this prompt worked much more reliably when any numbers in the prompt were written as ‘five’ instead of ‘5’.
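The three parts described above might be assembled as in the minimal sketch below. The original prompt was shown as a screenshot, so the message wording, the example colours, the helper names `build_palette_messages` and `request_palette`, and the `gpt-3.5-turbo` model name are all assumptions for illustration. The call itself assumes version 1 or later of the `openai` Python library and an `OPENAI_API_KEY` environment variable.

```python
def build_palette_messages(description, count="five"):
    """Assemble the three-part prompt: system role, example exchange, request."""
    return [
        # Part 1: the system message sets the role and the output format.
        {
            "role": "system",
            "content": (
                "You generate colour palettes. Only output a comma-separated "
                "list of HTML colour codes. Do not explain your working."
            ),
        },
        # Part 2: a simulated exchange showing the kind of answer we expect.
        # The example colours here are made up.
        {"role": "user", "content": "Generate three colours for a sunset."},
        {"role": "assistant", "content": "#FF5E5B, #FFB347, #6A0572"},
        # Part 3: the actual request, with the number written as a word.
        {
            "role": "user",
            "content": f"Generate {count} colours for {description}.",
        },
    ]


def request_palette(description, model="gpt-3.5-turbo"):
    """Send the prompt to the API (needs the openai package and an API key)."""
    from openai import OpenAI  # imported here so the prompt builder works offline

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.chat.completions.create(
        model=model,  # assumed model name; use whichever chat model you have access to
        messages=build_palette_messages(description),
    )
    return response.choices[0].message.content


messages = build_palette_messages("a map of ocean depth")
print([m["role"] for m in messages])
```

Keeping the prompt builder separate from the API call makes it easy to inspect or tweak the messages without spending any API credit.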

The response from the OpenAI API is always a JSON object. There’s lots of information included in the response, but the part we’re interested in is the message content, which we can extract from the response in our Python notebook.
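As a rough illustration of that extraction step, the sketch below uses a hand-written dictionary that mirrors the shape of a chat completion response, trimmed to a few fields. The colour values are made up, and the regular expression is an extra safeguard of my own rather than part of the original notebook. Recent versions of the `openai` library return an object instead of a plain dictionary, in which case the same value is reached with `response.choices[0].message.content`.

```python
import re

# Illustrative stand-in for the JSON returned by the API (trimmed to the
# fields used here); the colour values are made up.
response = {
    "choices": [
        {
            "index": 0,
            "message": {
                "role": "assistant",
                "content": "#0B3954, #087E8B, #BFD7EA, #FF5A5F, #C81D25",
            },
            "finish_reason": "stop",
        }
    ]
}

# The generated text lives in the first choice's message content.
content = response["choices"][0]["message"]["content"]

# Keep anything that looks like a six-digit HTML colour code, which also
# guards against the model adding extra words around the list.
colours = re.findall(r"#[0-9A-Fa-f]{6}", content)
print(colours)
```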


If we visualise the list of colours using the matplotlib Python library (a helpful function for this can be found here), we get a nice colour gradient that we could then use to style a map within the notebook, apply to symbols in Map Viewer, or use to style a basemap with the Vector Tile Style Editor.
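For readers without the linked helper to hand, here is one possible way to preview a palette. It is a minimal sketch and not the function referred to above: `hex_to_rgb` and `show_palette` are hypothetical names, the colours are made up, and the plot shows a row of swatches rather than a smooth gradient.

```python
def hex_to_rgb(code):
    """Convert an HTML colour code such as '#FF8800' to RGB floats in 0-1."""
    code = code.lstrip("#")
    return tuple(int(code[i:i + 2], 16) / 255 for i in (0, 2, 4))


def show_palette(colours):
    """Draw the colours as a single row of swatches and return the figure."""
    import matplotlib.pyplot as plt  # imported here so hex_to_rgb has no dependencies

    fig, ax = plt.subplots(figsize=(len(colours), 1))
    ax.imshow([[hex_to_rgb(c) for c in colours]])  # one row, one pixel per colour
    ax.set_axis_off()
    return fig


palette = ["#0B3954", "#087E8B", "#BFD7EA"]  # made-up example colours
print([hex_to_rgb(c) for c in palette])
```

In a notebook, calling `show_palette(palette)` in a cell should display the swatches inline.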

Example 2: extracting information from text

One of the things that LLMs like ChatGPT are great at is restructuring information. You can feed a paragraph of text into ChatGPT and it will be able to rewrite it into almost any style or form you choose. If you’ve ever been curious what your favourite movie quote would look like as a haiku then ChatGPT is the tool for you. Aside from being an endless source of entertainment, this particular capability of LLMs is very useful to us in the GIS world because we can use it to transform information from an unstructured form like a blog article or report into a data table that can be mapped.

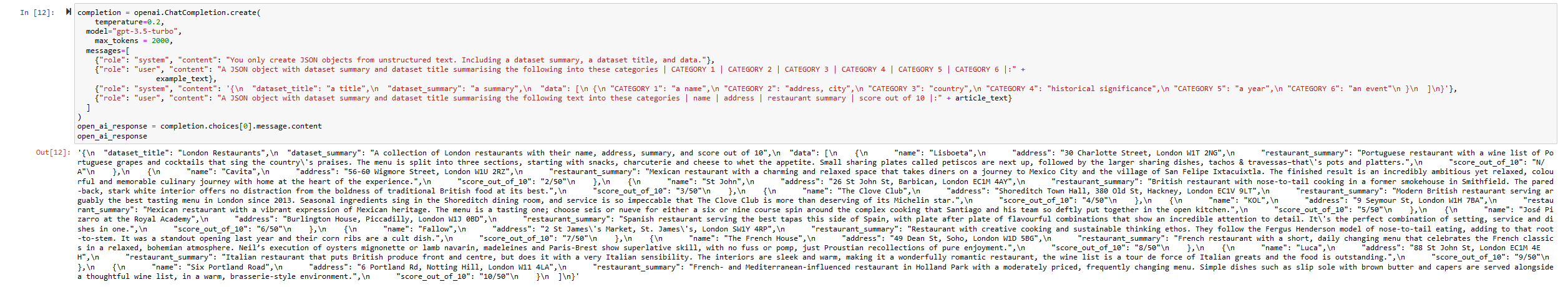

In this example, we will take a blog article on restaurants and extract the useful information into a feature layer. First we tell the AI the role that we would like it to take. In this example we only want it to output a JSON object, so we describe a role that only outputs JSON objects.

Next, we give the AI an example user request and describe the data structure we would like it to output. Here we do this with a ‘system’ message rather than an ‘assistant’ message as I’ve found that this sometimes works better. It’s worth trying if ‘assistant’ messages don’t give you a consistent result.

Finally, we give the AI the instructions we would like it to execute and give it the data we would like it to restructure. Again, telling the AI to not explain its workings reduces the likelihood the AI generates extra text we don’t want.
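Put together, the prompt for this example might look something like the sketch below. The exact wording used in the demo is not reproduced here, so the message text, the `build_extraction_messages` helper and the field names (`name`, `address`, `description`, `score`) are assumptions chosen for illustration.

```python
def build_extraction_messages(article_text):
    """Build a prompt asking for restaurants in an article as a JSON object."""
    return [
        # The role: a tool that only ever outputs JSON.
        {
            "role": "system",
            "content": "You are a data extraction tool that only outputs JSON objects.",
        },
        # An example user request...
        {
            "role": "user",
            "content": "Extract the restaurants mentioned in this article.",
        },
        # ...followed by a description of the data structure, given as a
        # 'system' message rather than an 'assistant' message.
        {
            "role": "system",
            "content": (
                'Respond with a JSON object of the form {"restaurants": '
                '[{"name": string, "address": string, "description": string, '
                '"score": number out of 10}]}.'
            ),
        },
        # The instructions to execute, plus the text to restructure.
        {
            "role": "user",
            "content": (
                "Extract every restaurant from the article below. Estimate a "
                "score out of 10 from the tone of the text. "
                "Do not explain your working.\n\n" + article_text
            ),
        },
    ]


messages = build_extraction_messages("Placeholder article text goes here.")
print([m["role"] for m in messages])
```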

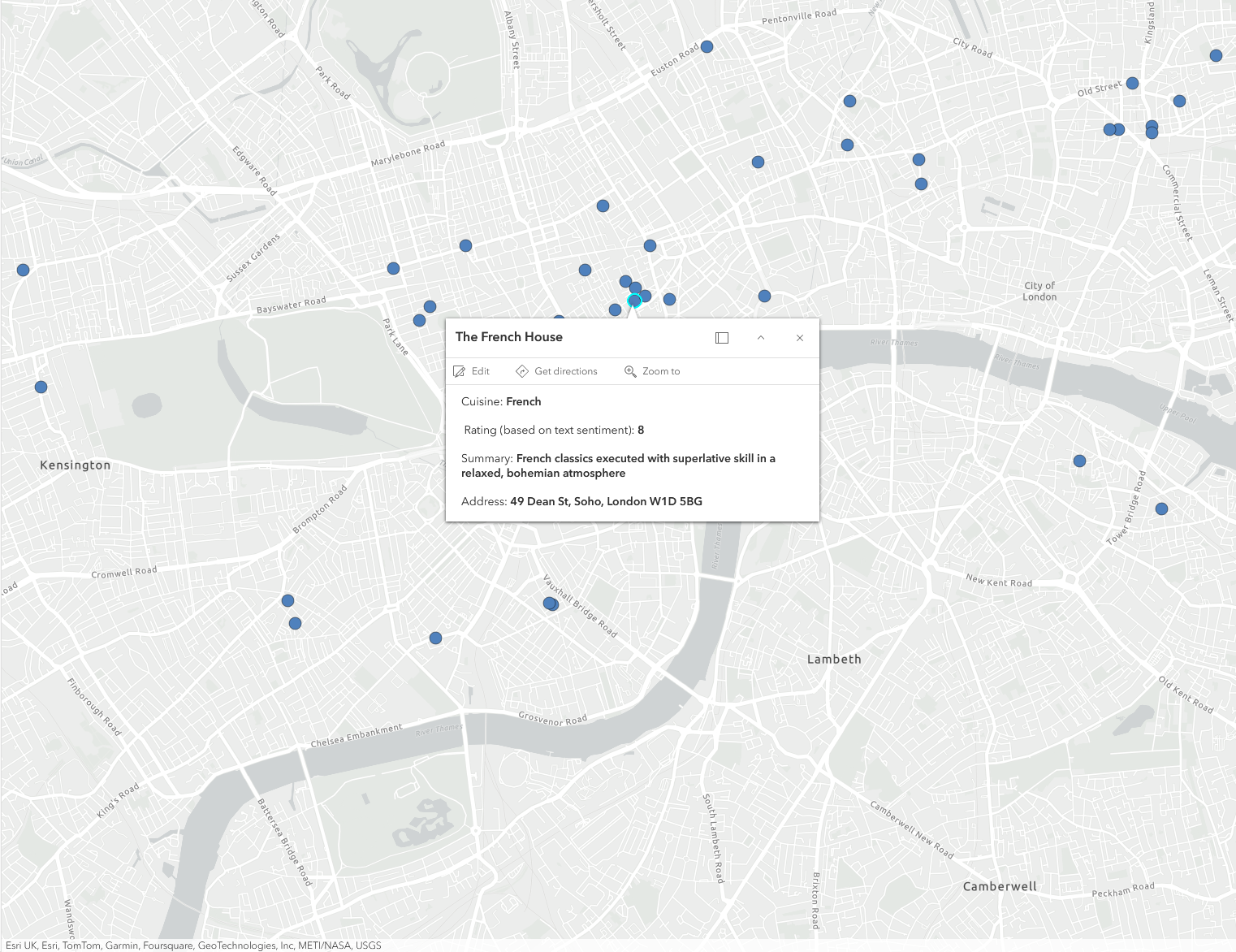

The output might look a bit of a mess, but it is in fact a nicely structured JSON object (trust me). We can process it into a table, geocode the address information and publish it to ArcGIS Online, where it can be used for all kinds of analysis and visualisations.
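The first of those steps, turning the JSON text into a table, could be sketched as follows using only the Python standard library. The sample output, the restaurant details and the field names are made up for illustration, and the geocoding and publishing steps are left out because they depend on your ArcGIS setup.

```python
import csv
import io
import json

# Made-up example of the model's output, using hypothetical field names.
content = (
    '{"restaurants": ['
    '{"name": "The Harbour Kitchen", "address": "1 Quay Street, Exampleton", '
    '"description": "Seafood by the water", "score": 8}, '
    '{"name": "Green Fork", "address": "22 High Street, Exampleton", '
    '"description": "Vegetarian brunch spot", "score": 7}]}'
)

FIELDS = ["name", "address", "description", "score"]


def to_rows(json_text):
    """Parse the model's JSON text into a list of row dictionaries."""
    return json.loads(json_text)["restaurants"]


def rows_to_csv(rows):
    """Write the rows to CSV text, one line per restaurant."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = to_rows(content)
csv_text = rows_to_csv(rows)
print(csv_text)
```

A CSV like this, with the address in its own column, is a convenient starting point for geocoding.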

Not everything in our prompt worked as expected though. In the prompt we asked for a score out of 10 for each restaurant, but the AI has generated a score out of 50 instead. Admittedly, this is quite an ambitious request for the AI. There is no score out of 10 in the input text, so we want the AI to estimate a score based on the content of the text. I imagine more specific instructions would help the AI generate scores in the way we would like.
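Because the AI will not always follow instructions like this, it can help to check the values before using them. The sketch below is one simple way to flag rows whose score is missing or falls outside the expected range. The `out_of_range_scores` helper, the `score` field name and the sample rows are hypothetical.

```python
def out_of_range_scores(rows, low=0, high=10):
    """Return the rows whose score is missing, non-numeric or outside low..high."""
    flagged = []
    for row in rows:
        score = row.get("score")
        is_number = isinstance(score, (int, float)) and not isinstance(score, bool)
        if not is_number or not low <= score <= high:
            flagged.append(row)
    return flagged


# Made-up rows: one valid score, one out of range, one missing.
rows = [
    {"name": "A", "score": 8},
    {"name": "B", "score": 42},
    {"name": "C"},
]
flagged = out_of_range_scores(rows)
print([row["name"] for row in flagged])
```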

As you can hopefully see, the structure of the prompt in this example is quite similar to the one in Example 1. This is really the point I want to get across – writing prompts for generative AI, even in a developer context, is not very complex. It usually follows the same kind of structure. This is what makes generative AI such a powerful tool for accomplishing all kinds of things. I recommend diving in and experimenting to find what generative AI can do for you.

A word of warning

Generative AI is a hugely exciting technology with lots of potential, but an important thing to remember is that no AI can escape the patterns and biases in its training data. We should always think critically about information generated by AI. Can it be verified from a trusted source? What biases might exist in the training data that influenced its output? Generative AI can be (and often is) wrong, and when it is, it will be confidently wrong. I don’t mean to discourage you from experimenting with generative AI – far from it – but so much of the discussion on AI is around what it can do, and what it will be able to do in the future, that it’s easy to forget about its shortcomings.

Final thoughts

As you’ve hopefully seen from these examples, instructing an AI isn’t all that complicated – it just requires good communication. This is really the most important breakthrough of generative AI, and why I think this technology has had such broad appeal. No longer does using AI require a computer science degree. Achieving anything you desire (within reason) with generative AI is as easy as describing it in text.